VA’s New Power Brokers

Department of Veterans Affairs Chief Information Officer LaVerne Council has a new chief of staff. Kai Fawn Miller, the director of IT Strategic Communication for the Office of Information and Technology (OI&T), will assume the role of chief of staff on June 26, according to an email from Council intercepted by The Situation Report.

“As Chief of Staff, Kai will work directly with the leadership team to ensure that our daily activities are balanced with our overall mission,” Council wrote in an email to staff. “She will make recommendations to help ensure consistency across our divisions’ processes and resources, and she will continue to keep leadership informed of the feedback and ideas from all of our employees in order to bring urgent issues, great innovations, and our biggest successes to light.”

In addition to naming a new chief of staff, Council appointed five new authorizing officials for VA IT systems. According to a June 15 memorandum, Council gave the following senior executives “full authority” to authorize IT systems for operation:

- Ronald Thompson, Principal Deputy Assistant Secretary for Information and Technology

- Susan McHugh-Polley, Deputy Assistant Secretary for Service Delivery and Engineering

- Robert Thomas, Deputy Assistant Secretary for Enterprise Program Management

- Daniel Galik, Associate Deputy Assistant Secretary for Security Operations

- Dominic Cussatt, Executive Director for Enterprise Cybersecurity Strategy

Now the question is: How many VA systems do not have an authority to operate (ATO)?

VA Transformation & Strategic Sourcing

Our well-placed moles throughout the VA enterprise have reported that Council is quite pleased with the agency’s progress to date on its so-called transformation efforts. In a June 10 email, Council said OI&T is two quarters ahead of its anticipated plan, meeting four of its seven transformation milestones.

One of those milestones is the formation of a Strategic Sourcing initiative.

“Effective immediately, our IT acquisitions team–led by Luwanda Jones–will transition to be the first fully-staffed function in the strategic sourcing organization,” Council wrote. “As this team continues to oversee the effective execution of our IT acquisitions for the rest of the fiscal year, they will also play a vital and pathfinding role in the formation of the strategic sourcing office. Until our DCIO for strategic sourcing is hired, Luwanda will report directly to me.”

The goal of the new strategic sourcing office is to improve VA OI&T’s speed to market, ensure compliance with the Federal Information Technology Acquisition Reform Act (FITARA), and foster the most responsible allocation of taxpayer resources.

Brian Burns Update

It’s time for your humble correspondent to eat crow. I recently predicted that Brian Burns, the former VA chief information security officer, was lining up to possibly take on the new Federal CISO position. Well, my listening post outside VA headquarters has corroborated signals coming out of the New Executive Office Building that the Federal CISO announcement isn’t likely coming until July.

And an intercepted cable from the Commandant of the U.S. Coast Guard shows that Burns is now the new deputy CIO of the Coast Guard. He’s been in that position since June 12.

Codename: Thor

The Intelligence Advanced Research Projects Activity (IARPA) is seeking technology that will detect when an individual is attempting to spoof a biometric security system. Known as a biometric Presentation Attack, or PA, the process involves using a prosthetic to conceal a biometric signature or present an alternative biometric signature.

Current methods of detecting a biometric spoof attack rely mostly on what is called “liveness detection”—making sure that a fingerprint presented to a fingerprint reader, for example, is from a finger that is attached to a living human being, or that a retina scan detects pupil dilation (another indicator that the body part is attached to a living subject).

IARPA’s new Thor research project will focus primarily on finger, face, and iris biometric scanning. However, the system (or systems, if different hardware is used for each biometric scan) must be able to accurately assess the integrity of the process without a human in the loop. Potential use cases include travel checkpoints, facility access points, identity verification, and cyber authentication.

Return to Creepy Analytics

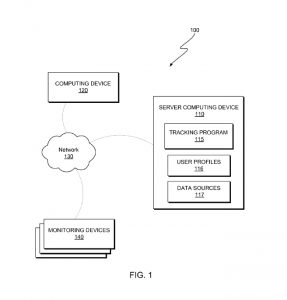

If you’re old enough to remember Admiral John Poindexter’s Total Information Awareness program at the Pentagon’s Defense Advanced Research Projects Agency, then you’re old enough to be creeped out by IBM’s latest patent: Monitoring Individuals Using Distributed Data Sources.

According to the patent document, IBM’s invention “relates generally to the field of security, and more particularly to verifying the location of an individual.” What it actually does, however, is overcome the limitations of existing tracking technologies, such as limited range and requiring the person being tracked to carry a GPS-enabled tracking device.

“An embodiment of the present invention provides multiple sources of information that are used to determine the location of a given individual…[and]…provides authority figures with the option of selecting which types of data sources are used for tracking purposes,” the patent states.

In one scenario detailed in the patent, video cameras that are known to the monitoring program capture images of an individual and feed that image into a facial recognition program. But in cases where the video-capture device is not known to the system, sounds and objects from the surrounding area can be used to determine the location of the person in the video.

“For example, analysis of the video indicates the sound of a train passing by along with lettering that reads ‘XYZ.’ In this example, the user profile includes the names and addresses of the friends of the individual along with a known range of travel of the individual. Tracking program 115 searches the Internet for train tracks, the words ‘XYZ’ and the known range of travel of the individual. Tracking program 115 applies statistical analysis to the results of the search and determines that the individual is most likely at the house of their friend.”

Sure, this sounds great for parents of tweens. But Situation Report is officially creeped out.

Gone Fishing

The Situation Report is off next week. Your humble correspondent will be enjoying surf fishing for record-setting stripers and bluefish, without the tyranny of email access.